Key Takeaways

- AI-generated images are harder to spot

- AI detection tools exist, but are underused

- Artists’ participation is essential to preventing misuse

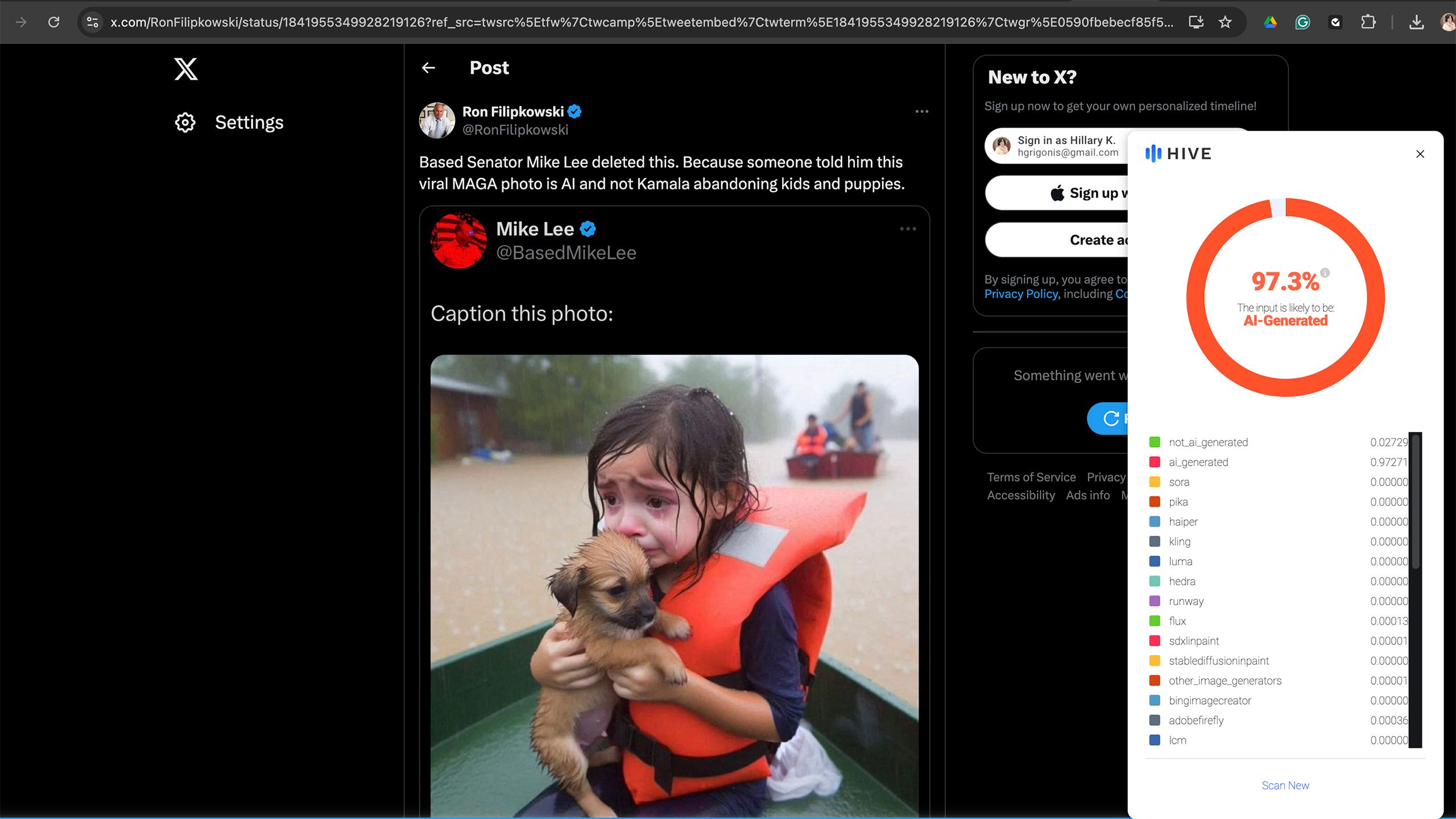

In the wake of the devastation caused by Hurricane Helene, an image of a little girl crying while clinging to a puppy on a boat in a flooded street went viral as a symbol of the storm’s toll. The problem? The girl (and her puppy) don’t actually exist. The image is one of many AI-generated depictions flooding social media in the aftermath of the storms. It brings up a key issue in the age of AI: generative AI is growing faster than the technology used to flag and label such images.

Several politicians shared the nonexistent girl and her puppy on social media to criticize the current administration, and yet that misappropriation of AI is one of the more innocuous examples. After all, Helene was the deadliest hurricane in the U.S. since 2017, and its destruction has been photographed by many actual photojournalists, from striking images of families fleeing through floodwaters to a tipped American flag underwater.

However, AI images meant to spread misinformation are quickly becoming a problem. A study published earlier this year by Google, Duke University, and several fact-checking organizations found that AI didn’t account for many fake news photos until 2023 but now makes up a “sizable fraction of all misinformation-associated images.” From the pope wearing a puffer jacket to an imaginary girl fleeing a hurricane, AI is an increasingly easy way to create false images and video that help perpetuate misinformation.

Using technology to fight technology is key to recognizing and ultimately preventing artificial imagery from going viral. The problem is that technological safeguards are developing at a much slower pace than AI itself. Facebook, for example, labels AI content built using Meta AI, as well as content it detects was generated on outside platforms. However, the tiny label is far from foolproof and doesn’t work on all types of AI-generated content. The Content Authenticity Initiative, an organization that includes many industry leaders, among them Adobe, is developing promising tech that could keep the creator’s information intact even in a screenshot. Still, although the Initiative was organized in 2019, many of its tools are still in beta and require the creator to participate.

AI-generated images are getting harder to recognize

The better generative AI becomes, the harder it is to spot a fake

I first saw the hurricane girl in my Facebook news feed, and while Meta is making a greater effort to label AI than X, which allows users to generate images of recognizable political figures, the image didn’t come with a warning label. X later flagged the photo as AI in a Community Note. Still, I knew right away that the image was likely AI-generated: real people have pores, whereas AI images still tend to struggle with details like texture.

AI technology is quickly recognizing and compensating for its own shortcomings, however. When I tried X’s Grok 2, I was startled not just by its ability to generate recognizable people, but also by how detailed these “people” often were; some even had pores and skin texture. As generative AI advances, these artificial images will only become harder to recognize.

Many social media users don’t take the time to vet the source before hitting that share button

While AI detection tools are arguably developing at a much slower rate, such tools do exist. For example, the Hive AI detector, a plugin I’ve installed in Chrome on my laptop, rated the hurricane girl as 97.3 percent likely to be AI-generated. The problem is that these tools take time and effort to use. Most social media browsing happens on smartphones rather than laptops and desktops, and even if I chose a mobile browser over the Facebook app, Chrome doesn’t allow such plugins on its mobile version.

For AI detection tools to have the most significant impact, they need to be embedded in the tools people already use and have widespread participation from the most popular apps and platforms. If AI detection takes little to no effort, I believe we could see much wider use. Facebook is trying with its AI label, though I do think the label needs to be far more noticeable and better at detecting all types of AI-generated content.

Widespread participation will likely be the hardest part to achieve. X, for example, has prided itself on building Grok AI with a loose moral code. A platform that is very likely drawing a large share of users to its paid subscription with lax ethical guidelines, such as the ability to generate images of politicians and celebrities, has little financial incentive to join forces with those fighting the misuse of AI. Even AI platforms with restrictions in place aren’t foolproof: in a study by the Center for Countering Digital Hate, researchers bypassed those restrictions to create election-related images 41 percent of the time using Midjourney, ChatGPT Plus, Stability.ai DreamStudio, and Microsoft Image Creator.

If AI companies themselves worked to properly label AI content, these safeguards could arrive much faster. This applies not just to image generation but to text as well: OpenAI has been working on a watermark for ChatGPT output as a way to help educators recognize students who took AI shortcuts.

Artist participation is also key

Proper attribution and AI-scraping prevention could help incentivize artists to participate

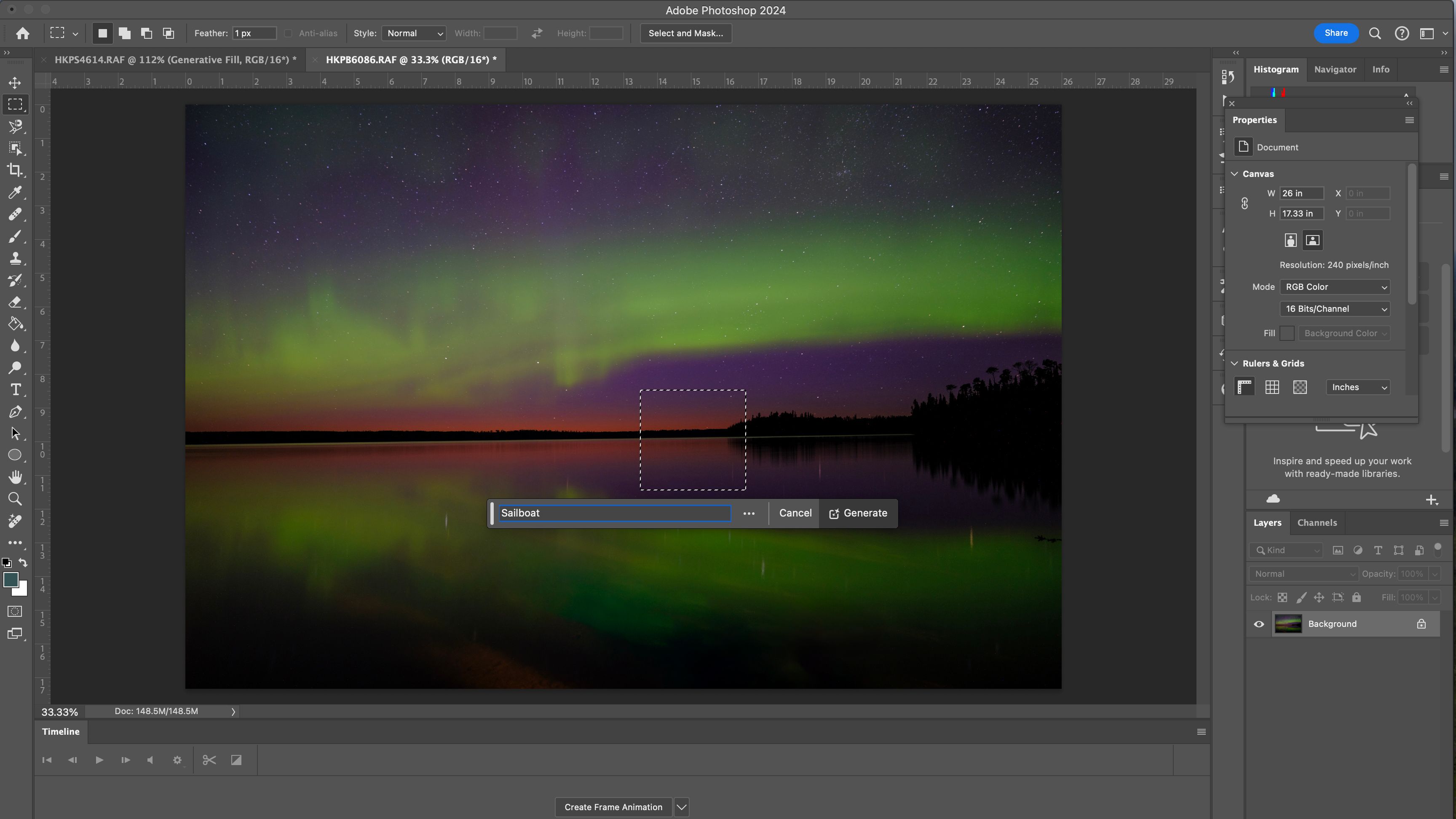

While the adoption of safeguards by AI companies and social media platforms is important, the other piece of the equation is participation by artists themselves. The Content Authenticity Initiative is working to create a watermark that not only keeps the artist’s name and proper credit intact, but also records whether AI was used in the creation. Adobe’s Content Credentials is an advanced, invisible watermark that labels who created an image and whether AI or Photoshop was used to make it. That data can then be read by the Content Credentials Chrome extension, with a web app expected to launch next year. Content Credentials work even when someone takes a screenshot of the image, and Adobe is also working on using the tool to prevent an artist’s work from being used to train AI.

Adobe says that it only uses licensed content from Adobe Stock and the public domain to train Firefly, but it is building a tool to block other AI companies from using an image as training data.

The problem is twofold. First, although the Content Authenticity Initiative was organized in 2019, Content Credentials (the name for that digital watermark) is still in beta. As a photographer, I can now label my work with Content Credentials in Photoshop, yet the tool is still in beta, and the web tool for reading that data isn’t expected to roll out until 2025. Photoshop has tested several generative AI tools and moved them into the full release since then, but Content Credentials seem to be on a slower rollout.

Second, Content Credentials won’t work if the artist doesn’t participate. Currently, Content Credentials are optional, and artists can choose whether or not to add this data. The tool’s ability to help prevent an image from being scraped to train AI and to keep the artist’s name attached to the image are good incentives, but the tool doesn’t yet seem to be widely used. If the artist doesn’t use Content Credentials, the detection tool will simply show “no content credentials found.” That doesn’t mean the image in question is AI-generated; it simply means the artist didn’t opt in to the labeling feature. For example, I get the same “no credentials” message when viewing the Hurricane Helene photos taken by Associated Press photographers as I do when viewing the viral AI-generated hurricane girl and her equally fabricated puppy.

While I do think the rollout of Content Credentials is moving at a snail’s pace compared with the rapid deployment of AI, I still believe it could be key to a future where generated images are properly labeled and easily recognized.

The safeguards against the misuse of generative AI are starting to trickle out and show promise. But to make the biggest impact in the AI era, these systems will need to be developed at a much faster pace, adopted by a wider range of artists and technology companies, and designed so that anyone can use them easily.
